Technology Transfer - since 1986

Online Events

Due to time zones, events presented by American speakers will be spread over more days, and will take place in the afternoon from 2 pm to 6 pm Italian time

Introduction to Generative AI for Java Developers

ONLINE LIVE STREAMING

May 13 - May 14, 2024

By: Frank Greco

April 2024

Upcoming events by this speaker:

May 13-14, 2024 Online live streaming:

Introduction to Generative AI for Java Developers

Generative AI and Enterprise Java Developers (Second Part)

Effective Communication with Generative AI

Generative AI has evolved rapidly and offers considerable potential for creative work and problem-solving. Getting useful results from these models depends on communicating with them effectively, which means mastering the art of prompt engineering.

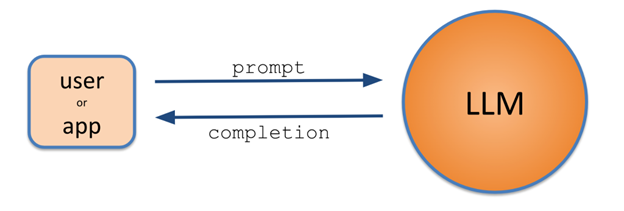

At the core of interacting with generative AI are two concepts: prompts and completions. A prompt is the input you provide to a large language model (LLM); it guides the model toward relevant, coherent output. The output the LLM returns is called a completion. In effect, the LLM says, “I will take your prompt as a starting point and complete it with a string of words, choosing the most probable ones based on what I have learned.”
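To make the prompt/completion relationship concrete, here is a deliberately tiny sketch in Java. It is not how a real LLM works internally: it assumes a toy word-pair (bigram) frequency table built from one sentence, and it always picks the most frequent next word. The class name `ToyCompleter`, its `complete` method, and the training sentence are all hypothetical, chosen only for illustration.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Toy illustration only: a bigram table standing in for a "model".
// A real LLM uses a neural network over tokens, not word-pair counts.
class ToyCompleter {
    // counts.get(a).get(b) = how often word b followed word a in the training text
    private final Map<String, Map<String, Integer>> counts = new HashMap<>();

    ToyCompleter(String trainingText) {
        String[] words = trainingText.toLowerCase().split("\\s+");
        for (int i = 0; i + 1 < words.length; i++) {
            counts.computeIfAbsent(words[i], k -> new HashMap<>())
                  .merge(words[i + 1], 1, Integer::sum);
        }
    }

    // Returns the completion: up to maxWords words that continue the prompt.
    String complete(String prompt, int maxWords) {
        String[] promptWords = prompt.toLowerCase().trim().split("\\s+");
        String current = promptWords[promptWords.length - 1];
        List<String> completion = new ArrayList<>();
        for (int i = 0; i < maxWords; i++) {
            Map<String, Integer> followers = counts.get(current);
            if (followers == null) {
                break; // nothing learned about what follows this word
            }
            String best = null;
            int bestCount = -1;
            for (Map.Entry<String, Integer> e : followers.entrySet()) {
                // Pick the most frequent follower; break ties alphabetically
                // so the result is deterministic.
                boolean moreFrequent = e.getValue() > bestCount;
                boolean tieButEarlier = e.getValue() == bestCount
                        && e.getKey().compareTo(best) < 0;
                if (moreFrequent || tieButEarlier) {
                    best = e.getKey();
                    bestCount = e.getValue();
                }
            }
            completion.add(best);
            current = best;
        }
        return String.join(" ", completion);
    }

    public static void main(String[] args) {
        ToyCompleter model =
                new ToyCompleter("the cat sat on the mat the cat ate the fish");
        String prompt = "I saw the";
        // Prints: I saw the -> cat ate the
        System.out.println(prompt + " -> " + model.complete(prompt, 3));
    }
}
```

The sketch keeps the two roles visible: the caller supplies the prompt, and the model returns only the continuation. Because this toy looks at just the last word of the prompt, rewording the rest of the prompt changes nothing; a real LLM conditions on the whole prompt, which is why careful wording matters there.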

Understanding how prompts relate to completions is key to getting the result you want from an LLM, and crafting prompts with precision is vital for obtaining meaningful completions.